A framework for petrophysically and geologically guided geophysical inversion using a dynamic Gaussian mixture model prior

Abstract¶

We propose a new framework for incorporating petrophysical and geological information into voxel-based geophysical inversion. By developing the geophysical inverse problem from a probabilistic perspective, we redesign the objective function and the iteration steps as a suite of cyclic optimization problems in which three separate MAP optimization problems are solved using geophysical, petrophysical and geological data respectively. By quantitatively linking these data into a single framework, we recover a final inverted model that reproduces the observed, or desired, petrophysical and geological features while fitting the geophysical data. To achieve our goal we replace the Gaussian prior, used in the Tikhonov inversion approach, by a Gaussian mixture model. After each geophysical model update, the mixture parameters (means, variances and proportions) are determined by the geophysical model and the expected characteristics of the lithologies through another optimization process using the Expectation-Maximization algorithm. We then classify the model cells into rock units according to the petrophysical and geological information. These two additional steps over the petrophysical and geological data result in a dynamic update of the reference model and associated weights and guide the inversion towards reproducing the expected petrophysical and geological characteristics. The resulting geophysical objective function does not require extra terms to include the additional petrophysical and geological information; this is an important distinction between our work and previous frameworks that carry out joint geophysical and petrophysical data inversion. We highlight different capabilities of our methodology by inverting magnetotelluric and DC resistivity data in 1D and 2D respectively. Finally we apply our framework to inverting airborne frequency domain data, acquired in Australia, for the detection and characterization of saline contamination of freshwater.

1Introduction¶

Geophysical inversions are an essential tool for mapping the subsurface. However, the image of the underground retrieved from an inversion rarely benefits from the full range of knowledge because petrophysical relationships or expected geologic features are not easily incorporated into traditional geophysical inversions. Since the early 2000’s, there has been an increasing interest in including this information to obtain more realistic geological interpretations Linde et al., 2015Moorkamp et al., 2016. Various strategies have been implemented such as adding bound constraints and preferential trends Astic & Chouteau, 2019Lelièvre et al., 2009Li & Oldenburg, 2000Williams, 2008, parameterization of the expected shapes of the geological bodies Fullagar et al., 2008McMillan et al., 2015, inclusion of more complex non-geophysical data such as structural data Wu, 2017, reproducing petrophysical data Bosch et al., 2009Grana & Della Rossa, 2010Sun & Li, 2015Zhdanov & Lin, 2017 and training on geological images Lochbühler et al., 2015.

For the inclusion of petrophysical data, the most recent frameworks have focused on using clustering techniques such as the fuzzy C-means algorithm. This was first used in Paasche & Tronicke (2007) and later expanded in Lelièvre et al. (2012). This approach adds a clustering term to the objective function and allows a less strict relationship between the physical properties of interest. Further expansion of the method has been carried out by Sun & Li (2015)Sun & Li (2016)Sun & Li (2017). In addition to the fuzzy C-means clustering term, they added an iterative update to the cluster centers; this starts to introduce the notion of uncertainty for the petrophysical data. For linear problems, Grana et al. (2017) proposed a Bayesian formulation using a fixed Gaussian mixture model as prior, which allows them to estimate the posterior distribution and then to sample from that distribution. Giraud et al. (2017) on their side focused on reducing geological uncertainties by linking geophysical inversion and stochastic geological modeling through petrophysical information using a fixed Gaussian mixture model to model the petrophysical information. They modified the usual least-squares objective function of the geophysical inverse problem by adding a probabilistic term, computed from the prior petrophysical and geological distributions. To do this they required fixed, and thus very strong, priors for both the petrophysical and geological information. That information however is not often well-known and may exist only at a qualitative level.

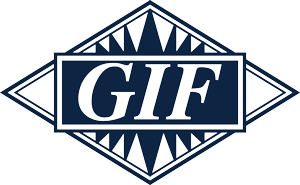

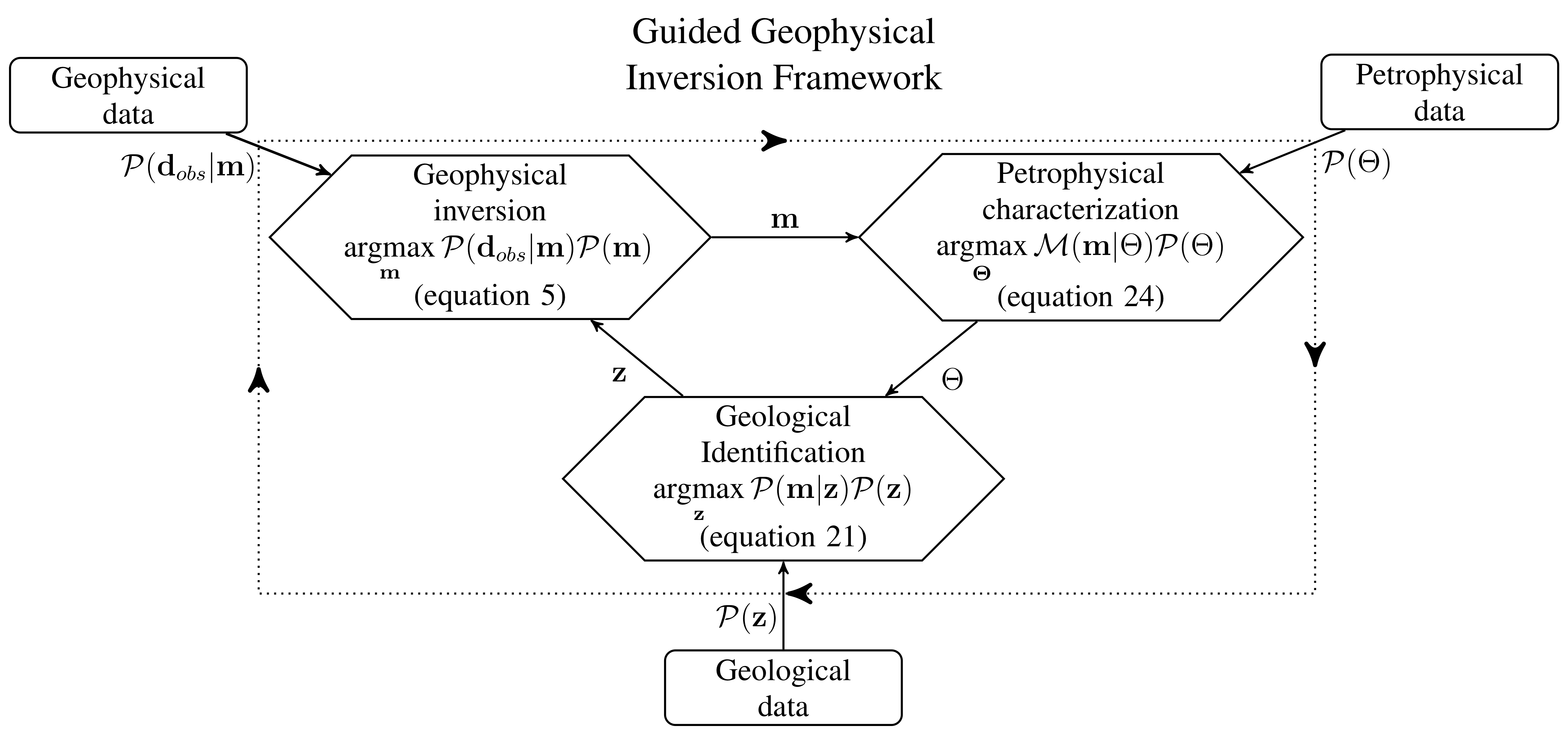

Figure 1:A graphical representation for our framework. Each diamond is a MAP estimation which requires data (shown in rectangular boxes) as well as input from the other MAP estimates.

This paper presents a new framework for petrophysically and geologically guided inversion (PGI) that generalizes concepts presented in Grana et al. (2017), Giraud et al. (2017) and Sun & Li (2015). We use a Gaussian mixture model (GMM) that represents our petrophysical and geological knowledge to regularize the geophysical inversion; this is analogous to the approach used by Grana et al. (2017). The idea of learning the physical properties mean values described in Sun & Li (2015) is formalized and extended to the variances and proportions of the GMM. At the same time we are able to include the geological information in the GMM in a similar way as did Giraud et al. (2017).

Our algorithm involves three optimization problems (Fig. 1, diamond-shape nodes) over the geophysical, petrophysical and geological knowledge, that are solved cyclically. Each optimization is cast within a Bayesian formulation as a MAP (Maximum A Posteriori) estimation over a posterior distribution. The framework is iterative and the geophysical model, the petrophysical characterization and the geological identification are updated through successive cycles. We show how petrophysical and geological information can be integrated into the smallness term of a conventional regularization operator in the geophysical inversion. Consequently we can achieve our objectives by carrying out a conventional deterministic geophysical inversion without adding extra terms in the regularization term. This is an important simplification since it eliminates the need for extra weighting parameters; it also allows previously developed Tikhonov-style inversion codes to be used. Our framework brings the petrophysical information to the same level as the geophysical information and allows us to generalize the concepts of uncertainties for the petrophysical data. This also allows us to define a formal target misfit for the petrophysical and geological information that is similar to the geophysical data target misfit; this concept has been missing from previous frameworks.

The paper proceeds in the following way. We first introduce the key concepts and vocabulary in section 2. We view the well-known Tikhonov inversion through a probabilistic lens to relate it to a MAP estimate of a posterior probability density distribution. This defines the geophysical inversion process displayed in our framework in Fig. 1 (process 1). The Gaussian mixture model (GMM) is then introduced as a way to represent geological and petrophysical information. Section 3 focuses on the mathematical description of the framework. We show how geological and petrophysical information can be linked in a voxel-based geophysical inversion through the smallness term by replacing the Gaussian prior in the Tikhonov approach with a Gaussian mixture model representing both the petrophysical and geological knowledge. The smallness term can thus be interpreted as a misfit for the petrophysical and geological data. This allows us to define a natural metric for determining an acceptable misfit. We also define the geological identification process (Fig. 1, process 3). Finally, to complete the framework definition, we present our approach to the dynamic petrophysical characterization (Fig. 1, process 2). Numerical solutions such as weighting strategy, convergence and pseudocode are presented in the section 4 which is dedicated to numerical implementation. In section 5 synthetic and field examples are used to illustrate some essential aspects of our algorithm. A 1D magnetotelluric (MT) example with both sharp and smooth features is used to illustrate the gain made in the recovery of a geophysical model when petrophysical information is available. We also use this example to step through the various stages of the algorithm. A 2D DC resistivity example is used to demonstrate our framework when petrophysical information is minimal; for instance when only an expected number of distinct units is expected. This example is also used to highlight the gain made by the inclusion of prior geological information. Finally we highlight how this framework can be used to incorporate constraints regarding the number of geological units and narrow down the domain of possible geophysical models. We use an airborne frequency domain electromagnetics (FDEM) field example, with data acquired in the Bookpurnong area in Australia, to invoke a geologic assumption about the number of units needed to characterize saltwater contamination. We conclude with a discussion about the key components of the PGI framework.

2Key concepts¶

In this section we first introduce the key concepts and vocabulary specific to a probabilistic formulation of the geophysical inverse problem. We will show that the Tikhonov objective function can be expressed in a Bayesian formulation, under the assumption of Gaussian priors, as a posterior probability density function. We then present our modelization choice, a Gaussian mixture model, for representing the petrophysical information.

2.1Tikhonov inversion and its probabilistic expression¶

The Tikhonov inversion Tikhonov & Arsenin, 1977 casts the inverse problem as an optimization problem in which an objective function Φ, such as shown in equation 1, is minimized. Using the same notation convention as Oldenburg & Li (2005), the goal is to find a solution that minimizes:

In equation 1, the vector is our geophysical model, which represents physical properties on a mesh. The term is the data misfit, is the model regularization function and β is a positive scalar that adjusts the relative weighting between the two terms. A value of β is sought so that the data misfit is below an acceptable target misfit Parker, 1977.

The geophysical data misfit is defined using a least-squares norm:

where is the forward modelling operator and are the observed data. The matrix contains the information about the data uncertainties but it is usually assumed that the data errors are uncorrelated and Gaussian. then becomes a diagonal matrix with elements where is the standard deviation for the datum.

The regularization term contends with the non-uniqueness of the inverse problem. Here we decompose it as:

where is the smallness term that enforces similarity between the model and a reference model . The smoothness terms are designed to penalize roughness of the geophysical model along the directions. The scalar parameters are weighting parameters for the different parts of the regularization function. An extensive interpretation and usage of the various parameters in this form of regularization can be found in Lelièvre et al. (2009) or in Williams (2008).

Both smallness and smoothness are important in our work but the smallness term will play a dynamic role. Our goal is to constrain the value that can be possibly taken by each cell of the model. The smallness term is thus the one of particular interest here (whereas the smoothness terms constrain the transition from one cell to another). We write the smallness term as:

where the matrix expresses a certain level of confidence in the reference model locally. At locations in the model domain where is large then differences between the recovered model and the reference model are highly penalized and vice versa.

The same objective function as in equation 1 can equivalently be expressed in a Bayesian formulation as a posterior probability density distribution Tarantola, 2005. A posterior distribution, such as defined by the Bayes rule, is proportional to a likelihood distribution , representing the data misfit, times a prior distribution (equation 5), normalized by the multiplicative constant :

with

The prior distribution represents the knowledge on the geophysical model such as a reference model, a certain level of smoothness etc. (equation 6). The model that maximizes this posterior distribution (equation 5) is called a MAP (Maximum A Posteriori) estimate; it is the same model that minimizes the objective function. In contrast, a model that only maximizes a likelihood distribution, a data misfit term with no prior, is usually referred to as a Maximum Likelihood Estimate (MLE).

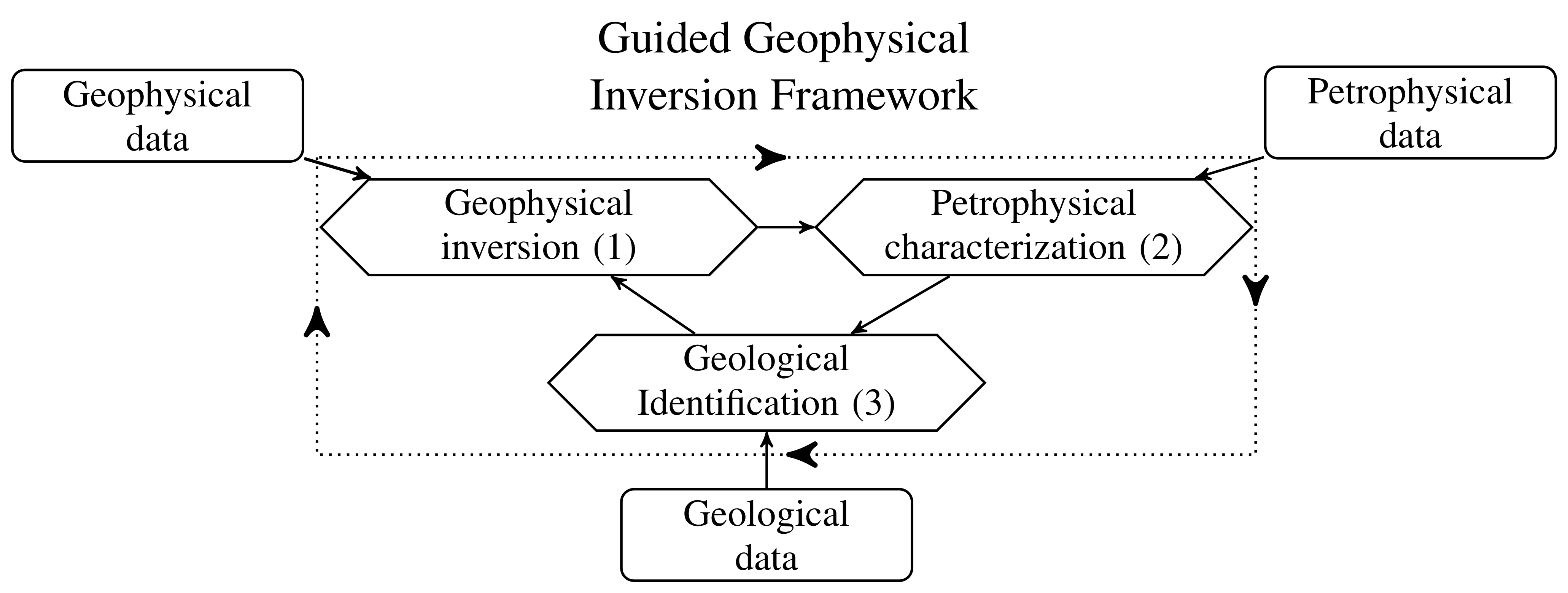

The objective function formulation is obtained by taking the negative natural logarithm of the posterior distribution. In a Tikhonov inversion, the data misfit as well as the priors are generally expressed as least-squares (equations 2 and 4). This translates into multivariate Gaussian distributions for the terms of the posterior distribution (see Fig. 2a for an unidimensional example). For a vector of parameters, the multivariate Gaussian distribution, denoted by , with mean and covariance Σ (denoted for a unidimensional Gaussian) is defined by:

From equation 7, we can see that choosing Gaussian distributions as priors and likelihood leads to a least-squares form of the terms in equation 1, through the application of a negative natural logarithm, as displayed in equations 2 or 4. This transformation is illustrated in Fig. 2. The summation of those terms shown in equations 1 is simply a consequence of the fundamental property of the logarithm function, the multiplications become additions. A detailed derivation can be found is appendix 1.

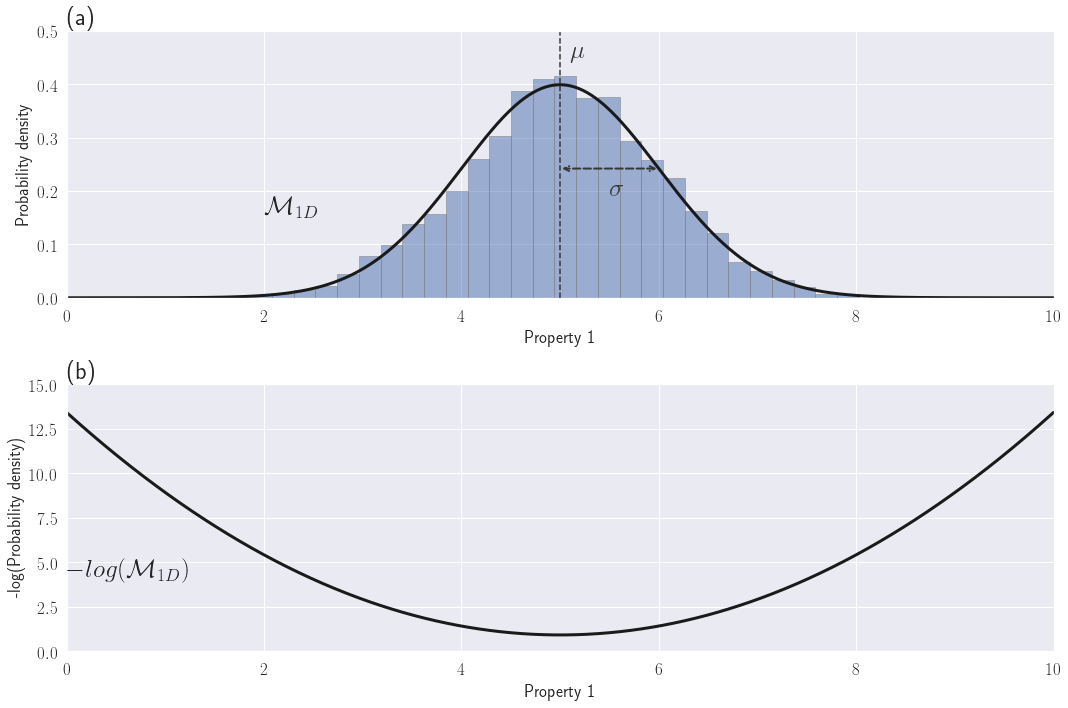

In this context, the prior distribution and the regularization are equivalent. The prior distribution is equivalent to the smallness term defined in 4. It can be expressed as a multivariate Gaussian distribution. Its mean is the reference model and its covariance is the weighting matrix . The factor expresses our confidence in this prior (equation 8). Increasing this confidence parameter is equivalent to reducing the variance around the reference model and thus the recovered solution becomes closer to the reference (such as illustrated in Fig. 3).

or equivalently:

Figure 2:(a) Examples of a Gaussian distribution in 1D for one physical property and (b) its quadratic negative-log equivalent

Figure 3:Gaussian distributions for various confidence parameters

Defining the smallness prior as a least-squares term such as in equation 4 thus assumes a Gaussian distribution of the model values around the reference model. This is unlikely to correspond to the true physical property distribution, especially when the initial model is a half-space as commonly used. With the Tikhonov approach, the incorporation of knowledge about the physical properties can only be done locally at fixed locations defined by the users Lelièvre et al., 2009. A more versatile method is required.

Our focus is on designing a more meaningful and dynamically updatable prior for the smallness term when non-geophysical data are available but not necessarily well located. This will allow us to guide the model towards producing an expected petrophysical distribution as well as capturing geological features. The inclusion of various types of information is done through the prior distribution. Our goal is thus to characterize each optimization process in Fig. 1 as a MAP estimate. We have addressed the geophysical inversion problem (Fig. 1, process 1). We now focus on the petrophysical characterization and geological identification.

2.2Gaussian mixture model representation of petrophysical information¶

To include physical property information into the inversion, we first need to define a way to model the physical property distributions. Our choice is to use a Gaussian mixture model, where each geological unit’s physical property is represented by a Gaussian distribution.

Consider that we are given a petrophysical dataset. We have samples, denoted , which represent the measured physical properties. For each sample we know its current geological classification. This is denoted by where is the number of distinct geological units. We can use the geological classification as a categorical variable. A sample belonging to unit is noted .

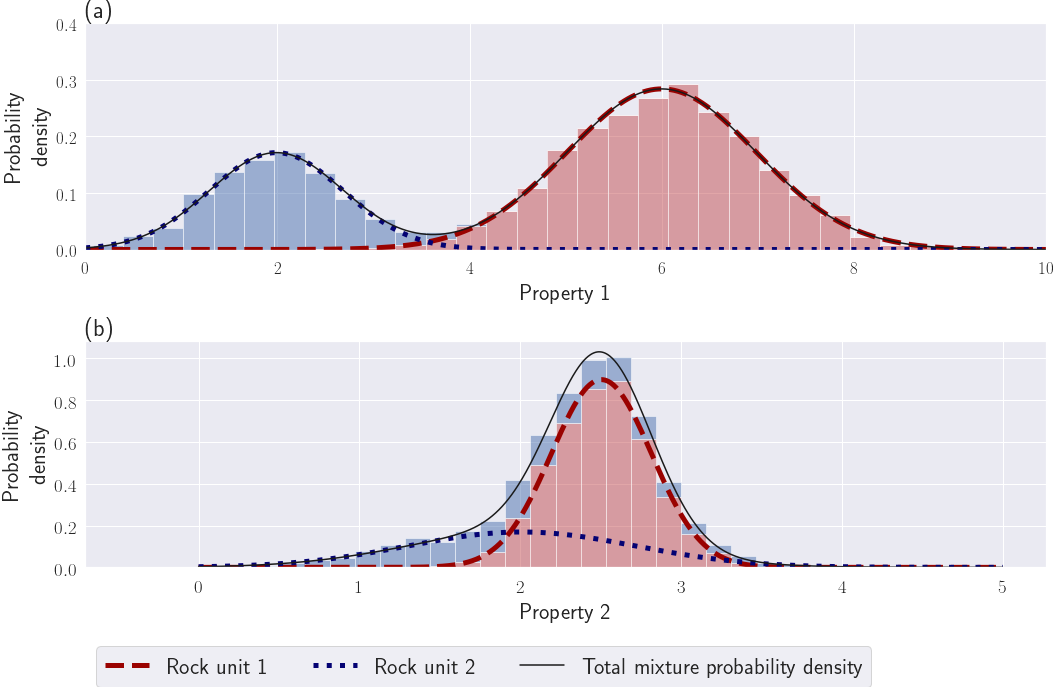

Figure 4:Examples of a Gaussian mixture like samples sets. (a) A GMM with two distinct units (the approximation in equation 20 is valid). (b) A GMM with overlapping units, the approximation in equation 20 is not valid.

For each geological unit , we can fit a Gaussian probability density distribution. If the unit does not follow a Gaussian distribution, we can often project the physical properties into a transformed space where it appears approximately Gaussian, such as a log-space for electrical conductivity or magnetic susceptibility (see the notion of mapping in the SimPEG framework as defined in Cockett et al. (2015)Kang et al. (2015)). With known labels, the MLE of the Gaussian distribution parameters for each unit , its mean and its variance (or standard-deviation ), plus its proportion among the dataset, are given in equations 10 to 12:

where the are our physical property measurements for each geological unit .

The full probability function to observe an unlabeled physical property data point can be written as a Gaussian mixture model (GMM) (see also Figs 4a and b):

The variable Θ holds the GMM global variables . A GMM is a parametric probability density distribution that can fit any continuous probability density distribution, when its number of clusters tends to infinity Murphy, 2012. It has gained popularity in recent years for representing geological and petrophysical information Giraud et al., 2017Grana & Della Rossa, 2010Grana et al., 2017Granek, 2011.

We denote as our categorical variable for the labels, i.e. our geological classification. We call this variable our membership. Given an unlabeled data point , its membership takes the value of the geological unit with the highest probability (see equation 14).

with:

Note that equation 14 defines a MAP estimate of the geological identifier . The parameters represent our prior expectation of observing a certain unit before observing (equation 15). This MAP estimate will be our template for designing the geological identification process in our framework (Fig. 1, process 3).

The only posterior distribution left to define is for the petrophysical characterization process. We wish to determine MAP estimates of the GMM parameters when labels are unknown (Fig. 1, process 2). This is done in section 3.2.

3Gaussian mixture model as smallness prior for geophysical inversion¶

3.1Gaussian mixture model prior definition¶

Suppose we are given petrophysical and geological information about the geophysical model that we want to recover. Petrophysical information can include mean or variance values of physical properties for different geological units. Geological information can consist of an expected number of distinct units or an anticipation of encountering certain rock units in particular locations. We need to design a prior that offers the maximum flexibility for representing that petrophysical and geological prior knowledge.

In equation 17 we propose a GMM that is designed to serve as a prior on , whose parameters are both spatially (index ) and lithologically (index ) dependent. Our probability function is:

where:

is the number of distinct rock units, or clusters.

is the number of active cells in the mesh.

represents our physical property value at the cell.

describes the membership to a certain rock unit.

represents our geological information. It is the prior probability of observing rock unit at location (vector notation: ). It can be either constant over the whole area, then representing an expected relative volume of each unit denoted by the global proportion , or locally set if the information is available (e.g borehole logs, outcrops or geological modeling (see Giraud et al. (2017))).

is the mean physical property of rock unit .

is the variance of the rock unit at the cell. It includes both the expected physical property variance of the rock unit globally and a local prior confidence that, at a certain cell , the model value should belong closer or farther away from the mean of the rock unit . Those weights can be used to include depth or sensitivity weighting.

Θ holds the GMM global variables .

Instead of adding an extra term in the objective function, we use the previously defined GMM probability distribution (equation 17), representing our current geological and petrophysical knowledge, as our smallness prior. To have consistency with the Tikhonov framework, such as that defined in equation 8, we write:

To formulate our objective function, we apply a negative logarithm to the new posterior distribution, which uses the GMM probability distribution as the smallness prior (equation 18). The resulting smallness term is then:

Although we have had positive results with using the above LogSumExp function in the smallness regularizer (the exponential function being in the Gaussian distribution ), there are benefits of using the following approximation. Gaussian distributions decay exponentially. If the units have distinguishable enough contrasts, it is reasonable to approximate the LogSumExp function in equation 19 by its locally dominant quadratic term. This means considering only the most probable rock unit for each cell given the current model . The other terms of the summation are deemed negligible. For example consider the 1D physical property distributions in Fig. 4. For the physical property distribution in Fig. 4(a), the individual Gaussian functions representing each rock unit can locally approximate the GMM extremely well. On the other hand, for the physical property distribution displayed in Fig. 4(b), there is too much overlap between the two individual Gaussian components. Individually they are not a good approximation to the full GMM distribution and the above assumption is incorrect. In that case, one can either work with the full expression shown in equation 19, or potentially re-evaluate whether the individual distributions are distinct enough to be considered as separate units. As a rule of thumb, contrasts are deemed distinguishable enough to apply this approximation if the centers of two clusters are separated by at least three times the largest standard deviation. By using the parameters of the most likely cluster at each active cell we can approximate as:

with:

Note the similarity of the smallness term in equation 20 with the Tikhonov formulation (equation 4). This allows us to generate a petrophysically and geologically guided inversion that uses traditional algorithms, and thus can rely on the research literature on the Tikhonov approach.

Our approach, regarding the regularized geophysical problem, can be summarized as follows: At each iteration, we first identify the most probable unit for each cell; we call it its membership, and store it in the categorical vector (equation 21). The reference model and smallness weights are then updated according to the membership . The reference model at each cell takes the physical property mean value of the most likely unit (equation 22). The smallness weights depend on the value of the corresponding variances and on our local weights (e.g depth or sensitivity weighting; see equation 23). These updates represent the newly acquired knowledge on according to the petrophysical distribution Θ and the geological information . We refer to the updated reference model as the learned reference model. The next inversion step then pushes each cell of the mesh towards the mean of its most probable rock unit. The strength of the push is proportional to the variance of the physical property for that unit (the higher the variance, the less we push), and our weights .

Equations 21 defines the MAP process for the geological identification (Fig. 1, process 3). Equations 22 and 23 link the petrophysical and geological information with the geophysical inversion.

One could proceed by using the means and variances fitted on the petrophysical data, as well as the proportions from the geological data, to compute , and at each iteration (equation 21, 22 and 23). However when this knowledge is incomplete or uncertain, our estimated GMM may not be accurate. Carter-McAuslan et al. (2015) have investigated that issue and shown that inaccurate petrophysical information can significantly affect the recovered model. Sun & Li (2015)Sun & Li (2016)Sun & Li (2017) partially overcome this by updating the cluster centers through the iteration process; they average the observed means in the geophysical model with the means from the petrophysical measurements through a separate clustering optimization problem. We generalize this approach to all of the parameters of the GMM. In the next section 3.2, we formulate the petrophysical characterization step of our framework (Fig. 1, process 2) as a MAP estimation process.

3.2Updating the Gaussian mixture model¶

One can either consider that the GMM parameters derived from petrophysical and geological data give the best estimation of the true distribution, or that it is only an approximation, and additional information can be obtained from the geophysical model during the inversion Sun & Li, 2015. We prefer this later approach. Since the prior knowledge might be incomplete or only known qualitatively, it is important to mitigate possible biases that can affect the final recovered model Carter-McAuslan et al., 2015. For that purpose Sun & Li (2015) updated each cluster center of their fuzzy C-means clusters through a weighted average of the expected center from the petrophysical data with the observed center in the geophysical model. From a statistical view point, this resembles the use of a MAP estimator to find the cluster means applied to the geophysical model itself, with the addition of a conjugate prior based on the petrophysical data. The notion of conjugate prior was first introduced in Raı̈ffa & Schlaifer (1961). By definition, a prior is called a conjugate prior to the likelihood function if the posterior belongs to the same family of probability distributions as the prior. For example the Gaussian distribution is self-conjugate. This means that if the likelihood follows a Gaussian distribution, choosing a Gaussian prior ensures that the posterior also belongs to the same family of probability density distributions. We use the conjugate prior approach to formalize the update of the means done in Sun & Li (2015) and generalize it to the other GMM parameters.

Obtaining the GMM parameters Θ is thus made part of the iterative inversion process. We define the petrophysical characterization process (Fig. 1, step 2) as a MAP estimator for Θ. This MAP estimate benefits both from the petrophysical and geological data known prior to the inversion and the current geophysical model. After each geophysical inversion iteration, and before updating the membership , we learn the mixture parameters Θ using the current geophysical model and our confidence in the prior knowledge . We define a posterior distribution on Θ, given the geophysical model and prior knowledge, as:

In section 2.2, the problem of fitting a single Gaussian distribution to each unit, and thus defining its petrophysical mean and variance, was made easy by knowing the geological unit of each sample. A much harder problem is to recover the full multimodal probability function described in equation 13 when there are missing labels. For example, in an inverted geophysical model , most locations for the geological units are unknown except where we have drillholes and outcrops. In fact, this is usually the information we are trying to determine. The categorical variable that represents our geological classification is now a hidden variable that we want to find along with the GMM parameters. Clustering algorithms are especially designed for this sort of task. The Expectation Maximization (EM) Algorithm Dempster et al., 1977 is one of the most widely used algorithms for semi-supervised and unsupervised learning in mixture modelling. It allows us to fit the parameters of a mixture model to a multimodal distribution with partial or no labeling. The EM algorithm in its original form is a MLE, thus no prior information is involved. To include prior information in the algorithm, and thus find a MAP estimate for the posterior distribution on Θ shown in equation 24, we use a MAP variation of the Expectation-Maximization algorithm (also introduced in Dempster et al. (1977)); we refer to this algorithm as the MAP-EM algorithm.

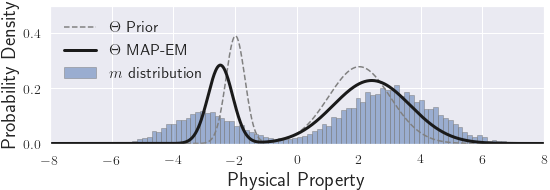

For the MAP-EM algorithm, we need to choose how to model the prior for each type of parameter. We can follow either a conjugate prior or semi-conjugate prior approach as described by Murphy (2012). Each step of the MAP-EM algorithm applied to a GMM with a conjugate or semi-conjugate prior can be understood as a weighted average of the observed parameters with their priors. Figure 5 shows an example of such a MAP estimate of the GMM parameters. We define the input of each prior function so that an input value of unity denotes an equal weighting of the observed and prior distributions. We refer to those input values as our confidences in the GMM prior parameters. Confidences of zero in the prior generate a MLE estimator; the prior parameters are not taken into account and the algorithm reverts to the normal EM algorithm. Infinite confidences in the prior fix the GMM’s parameters equal to their priors value.

Figure 5:Illustration of fitting a Gaussian mixture model with parameters Θ through the MAP-EM algorithm, given the geophysical model and the prior petrophysical distribution with all confidences set to unity

The MAP-EM algorithm is an iterative process. We initialize by starting with the parameters determined at the previous cycle. The usual stopping criteria defined in the literature for the MAP-EM algorithm is to define a minimum increase of the posterior probability density value. At each MAP-EM iteration , we first compute the responsibility of each cluster for each point . This is referred as the E-step in the EM algorithm and it stays unchanged in its MAP-EM variation:

We then compute the MAP-EM steps at iteration with respect to the global proportion weights , means and variances of the GMM. These steps are referred to as the M-step in the EM-algorithm. The MAP-EM variation M-step includes prior information as part of the iterative update.

The global proportion weights follow a categorical distribution whose conjugate prior is the Dirichlet distribution (equation 26):

At iteration , the resulting posterior estimate of , given and with confidence , is shown in equation 27:

with:

and

where is the volume of the cell and is the volume of the active mesh. The addition of these volume weights is necessary because the cells of our mesh can have different sizes. We want to use a prior information that is mesh-independent. That is why we use volumetric proportions instead of cell counts, which is the way the EM algorithm is usually implemented.

The full conjugate priors of the likelihood function in equation 24 for the means and variances are not independent of each other and they follow a Normal-Inverse-Gamma distribution Murphy, 2012. However using the full conjugate prior approach for the variances can be harmful in the first few iterations. Mean values from models at early iterations may be far from their final target values and that would drive the GMM estimated by MAP-EM to have very high variances (to compensate for the difference between the observed and prior means) and thus compromise the clustering (see Appendix 2 for further details).

To avoid this issue, a semi-conjugate prior approach is possible where the dependency of the means and variances is avoided by using the conditional distributions as priors. In this case the semi-conjugate prior for the means follows a Gaussian distribution (equation 30) while the semi-conjugate prior for the variances follows an Inverse-Gamma distribution (equation 31). As usually done in the statistics literature Murphy, 2012, we use an alternative parameterization of the Inverse-Gamma distribution called the Inverse Chi-Squared distribution. This re-parameterization allows for a simpler definition of the parameters. The semi-conjugate priors for the means and variances, with respective confidences and , are:

and:

Note that if we put prior information only on the means, or only on the variances, then this is a full conjugate prior. The semi-conjugate prior approach arises only when we put prior information on both means and variances (meaning that and are both non zero).

The update of the means is the same for both the full conjugate and semi-conjugate prior approaches. The current posterior estimate of the means is a weighted average of the MLE estimates from equation 11, using the geophysical model as the samples, with the prior means and with confidences . This update to the means is shown in equation 32:

with:

For the semi-conjugate prior, the current posterior estimate of the variances , given in equation 34, is a weighted average, with confidence , of the MLE estimations of the variances from equation 12 with their prior .

with:

We run fully the MAP-EM algorithm to learn a new petrophysical distribution with parameters after each iteration of the geophysical inversion process, using the current geophysical model and the prior petrophysical distribution .

This completes our derivation. Equation 24 in particular describes the MAP estimator for the petrophysical characterization. Our three MAP estimates are interconnected through the sharing of the geophysical model, petrophysical distribution and geological classification. The final framework is summarized in Fig. 6, which is the completed version of the previous diagram (Fig. 1). The next step is to numerically implement this procedure. That is done in the following section.

Figure 6:A graph approach illustrating how the various posterior distribution MAP estimate processes are interlocked with each other.

4Numerical Implementation¶

Our framework defines three inverse problems that are interconnected and special care is required to ensure that each is reasonably well solved. In this section we look at some of the essential implementation elements of this framework. A comprehensive algorithm and a flowchart can be found in appendix 3. However, before going into the details of the pseudocode, we formalize the concept of fitting the petrophysical and geological data and thus define a stopping criteria for our numerical solution.

4.1Petrophysical target misfit and inversion stopping criteria¶

The smallness term, such as defined in equation 20, measures how well the geophysical model fits the petrophysical and geological data through the GMM. We need to define a target for this misfit term.

For the definition and evaluation of the petrophysical target misfit, we focus on the petrophysical and geological information contained in the smallness term that is summarized in equations 36 to 39 and identified as .

with:

At each iteration, the element is a Gaussian random variable of mean zero and variance . Consequently elements in are Gaussian with zero mean and unit standard deviation. Thus is a chi-squared statistical parameter with expectation:

This defines the petrophysical target misfit value for as being half the number of active cells in the geophysical model (equation 40). As one condition for stopping our algorithm we want to find an for which is less than or equal to .

The equation 36 for evaluating the petrophysical stopping criterion omits the weights (equation 20). The additional weights are used to include depth or sensitivity weighting, which are more related to the physics of the survey rather than to the desired properties of the geophysical model. We have taken two approaches; the first is to minimize but keep the stopping criterion attached to . The second is to minimize and measure the stopping criterion using after adjusting the target misfit value to be . Thus far we prefer the former approach because the second has lead us in certain cases to underfit the petrophysical distribution. The examples, developed in sections 5, all use various weighting strategies and the stopping criterion approach.

We note this stopping criterion for the petrophysical data is analogous to the geophysical data misfit criterion. The geophysical data misfit term, such as defined in equation 2, is also a sum of Gaussian variables with zero mean and unit standard deviation. Thus a reasonable target misfit, such as introduced in Parker (1977), is:

where is the number of geophysical data.

Our algorithm stops when we have found a model that simultaneously has both and equal or below their respective target value and . Reaching multiple target misfits adds a new challenge to solving the inverse problem. We address this in the following section as we work through the important components of the pseudocode.

4.2Pseudocode and Weighting strategies¶

At each iteration of our PGI algorithm, three inverse problems need to be solved. Our algorithm is provided in two forms in appendix 3. The first is a pseudocode (algorithm 1), the second is a detailed flowchart that is linked to the pseudocode (Fig. 2). Here we discuss some of the important elements that assist in understanding the details of the algorithm.

4.2.1Step 1: Initialization¶

We are solving three MAP problems. Each needs to be initialized with an initial geophysical model , a petrophysical distribution and a membership respectively. We usually have these elements consistent with each other. For example, the initial reference model is usually the starting model. We can start from a background half-space , whose value is the same as in , and set at the background unit everywhere.

For the success of a geophysical inversion, the choice of the various weighting parameters can be critical. Our framework still uses a conventional geophysical inversion objective function, and hence previous studies and good practices are still applicable. We need to provide uncertainties for the geophysical data least-squares misfit term (equation 2). The objective function (equation 1) requires a reference model and initial weighting parameters. A common practice is to start with a large β and then decrease its value during the inversion iterative process Farquharson & Oldenburg, 2004. For the smallness and derivative weights , the similarity of our formulation with the Tikhonov approach allows us to rely on the existing literature (e.g Lelièvre et al. (2009)Oldenburg & Li (2005)Williams (2008)).

For the petrophysical inverse problem, we initialize the petrophysical distribution by setting . We thus need to design . For each unit, when petrophysical measurements or a priori estimates are available, both the mean and variance of the GMM cluster can be set. When no information is available, it is still required to provide some estimate for the variances. These prior variances can be thought of as petrophysical noise levels, analogous to the geophysical noise levels. They play an essential role in determining the petrophysical target misfit and regulate the clustering of each unit around its, to be determined, mean value. The proportions represent geological knowledge. When they are set globally, they represent the expected relative volume of each unit. The choice of confidences in the prior parameters is still a challenging question (see section 3.2 for definitions). When the petrophysical information is well-known, values of unity, or higher, have worked well. When no petrophysical information is known a priori, values of unity or above for the confidences in the prior variances are used, while confidences in the means and proportions are set to zero. In the examples section (section 5) we show examples for both the known and unknown petrophysical information cases.

The starting geological model can be determined by the starting geophysical model and petrophysical distribution. Additional local knowledge about the geology can be added through the choice of local proportion values for the GMM. We show a simple demonstration through the 2D resistivity example (section 5.2). For example, knowing that a unit appears at a certain location, can be added to the inversion. For that purpose we can locally set the proportion for this unit to unity, consequently giving a probability of zero for the other units at that same location.

This initialization completes step 1 in our pseudocode (algorithm 1) and flowchart (Fig. 2). The next step is to evaluate our stopping criteria and update the models.

4.2.2Step 2 - 5: Check convergence and update the model¶

At step 2, we check if the convergence criteria , and have been met. If these are not satisfied, then a model update is required. We start by taking a single Gauss-Newton step on the geophysical objective function Nocedal & Wright, 2006 (step 3). Step 4 consists in running the MAP-EM algorithm described in section 3.2 to learn a new petrophysical distribution parameters , using the updated geophysical model . Finally at step 5 we update the membership , using and according to equation 21. This updates the smallness weights and reference model for the next iteration, such as described in equation 22 and 23.

4.2.3Step 6: Updating β and ¶

In this step we adjust our weights so that the inversion arrives at a solution that acceptably fits both the geophysical data and the GMM containing the geological and petrophysical data. We have two adjustable parameters, β and . The parameter β balances the data misfit with the total regularization function, while allows us to adjust the importance of the smallness term.

Our current procedure uses a computationally inexpensive heuristic approach to find suitable β and parameters such that both geophysical and petrophysical misfits are equal or below their respective target value. We initially set β, and to values we would use for a standard inversion. We first invoke a β-cooling to get towards a solution that fits the geophysical data. Once reached, we focus more on fitting the petrophysical data by adjusting .

If the geophysical data misfit term reaches a plateau before attaining its target, we decrease β by a predetermined factor (step 6, first case scenario). We think of β as an outer-control parameter since it is a weighing for the entire regularization function, which includes smallness and gradient terms.

In our case we also want to find a solution that fits the petrophysical and geological data. For this we fine-tune the inner-control parameter . If the geophysical data misfit target has been reached, but not the petrophysical data misfit , we put more emphasis on the smallness model term. We increase by a factor (step 6, second case scenario). This is similar to the β-warming strategy described in Fournier (2015). Generally this allows us to focus the algorithm on reaching the petrophysical data misfit target without significantly altering the geophysical data misfit. If the geophysical data misfit is increased too much, then β is reduced. In summary, we use as a fine-tuning parameter as it is not adjusted until late stages of the inversion when the major structures have already been identified.

4.2.4Step 7: Final output¶

The algorithm stops when both the geophysical and petrophysical misfits are below or equal to their respective targets. The PGI framework outputs three quantities: the recovered geophysical model, the learned petrophysical distribution and membership. The geophysical model fits both the geophysical data and the learned petrophysical distribution. The membership attributes a rock unit to each cell; we refer to this categorized model as a quasi-geological model, as proposed by Li et al. (2019).

4.2.5Options¶

We have the possibility to include, or not, the learned reference model inside the smoothness components of the objective function. Our preferred strategy is to leave it out until we have reached the geophysical data misfit target and when only a small fraction of the membership is changing. This avoids the creation of interfaces at locations that are likely to change later in the inversion. Once the learned reference model has stabilized we can then incorporate the reference model into the smoothness term. It promotes the creation of interfaces, which also help to reach the smallness target misfit if it has not yet happened. This strategy and its effects are illustrated in the DC example (section 5.2).

4.3Convergence¶

At each iteration, the gradient-step for the geophysical objective function is similar to that in the Tikhonov formulation. This step is guaranteed to be a descent direction for the objective function. The objective function to be minimized, however, changes because the solution of the MAP-EM problem changes the reference model and smallness weights. Fortunately the MAP-EM algorithm guarantees a decrease of the smallness term at each iteration. In other words the updated petrophysical distribution is guaranteed to be closer to the current geophysical model than the previous distribution.

Thanks to these properties, our algorithm usually reaches the geophysical target misfit at a rate comparable to the Tikhonov approach, and then requires only a few additional iterations to reach the petrophysical target misfit. Each PGI example presented in the next section 5 runs in a number of iterations comparable to the Tikhonov inversion while reaching both of our stopping criteria. This is a significant result as this means applying this framework does not necessarily end in a massive loss of efficiency.

5Examples¶

In this section we present two synthetic examples and a field example that illuminate important aspects of our framework. These examples are available as part of the SimPEG package on Github at https://

The first example is a non-linear inverse problem in electromagnetics. Magnetotelluric data Ward & Hohmann, 1988 are acquired over a layered-earth that has sharp and smooth features. We are provided with the true petrophysical distribution and the goal is to use it, along with the MT data, to find a solution that has the desired features. We present the results at the various steps of the algorithm and display the updates done to the initial petrophysical and geological models that are used to guide the geophysical inversion.

In the second example, a DC resistivity profile Ward & Hohmann, 1988 is acquired over two cylinders. We demonstrate how this framework performs when the GMM is not known a priori. We also use this example to show how prior geological information can be included into the inversion.

The third example is an airborne Frequency-Domain Electromagnetics (FDEM) survey Ward & Hohmann, 1988 over a floodplain in Australia potentially contaminated by saline water. We highlight the influence of the choice of a reference model on the Tikhonov models. We then illustrate how the PGI approach can reduce ambiguity in the recovered features. This is achieved by constructing models in which the earth is assumed to have a certain number of distinct units. This sort of questions (what if there are units?), crucial for interpretation, are otherwise difficult to ask in a traditional Tikhonov setting.

5.1MT1D, layered and smooth Earth model: Demonstration of the framework¶

In this example, we demonstrate how to use the PGI framework to include petrophysical information. We first highlight the gains made in the recovered geophysical model. We then provide details about how the PGI iterations proceed.

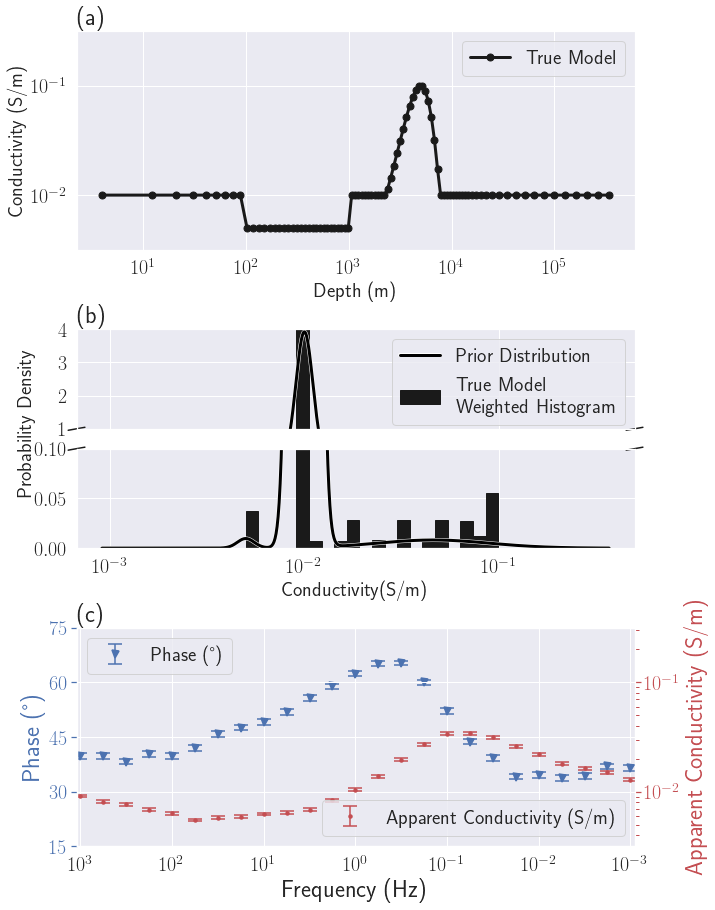

Figure 7:Setup of the MT1D example: (a) True geophysical model . The dots coincide with the cell centers in the discretized model. (b) True model weighted histogram and prior petrophysical distribution ; (c) MT data and uncertainties.

5.1.1Setup¶

For this example, inspired by Kang et al. (2017), we want to recover a 1D earth model that is made up a resistive layer and a smoothly varying unit embedded in a uniform background. The conductivity model, which is discretized onto a mesh with 89 cells, is shown in Fig. %s(a). The background unit has a conductivity of (). The resistive unit ( or ) is thick and starts at a depth of . The smooth conductor, with a maximum conductivity of (), is located between and depth. A log-scale, which is traditional for MT, is used.

In the MT experiment the electric and magnetic fields recorded at the earth’s surface are combined to generate impedances. In this example, data are collected at 25 logarithmically spaced frequencies between 10-3 and 103 Hz. This generates 50 data composed of the real and imaginary components of the impedance for each frequency. Unbiased Gaussian noise with a standard-deviation of of the data value is added. We represent those observed data in the form of apparent conductivity and phase in Fig. %s(c).

5.1.2Initialization¶

We first describe our initialization for both the Tikhonov and PGI algorithms. For the initial geophysical model , we choose a uniform half-space with the same conductivity as the background unit. For the PGI approach the starting membership is set to the background unit everywhere. Both the Tikhonov and PGI approaches start with the same objective function; the models after the first iteration are thus identical. This helps for comparing results between the two approaches.

For the PGI approach we also need to provide a GMM to describe our prior petrophysical and geological information . We use the exact information from the true earth model for the means and global proportions. For the variances, we use the true variance for the smooth unit. This unit is characterized by a wide distribution and thus it has a large variance. The background and the resistive unit are characterized by single values so their true variances are zero (Dirac distribution). We assign a standard deviation of (or variance of ) as an acceptable level of petrophysical noise for those two units. This prior petrophysical distribution can be visualized in Fig. %s(b). All the parameters were estimated by taking into account the "volume" of the cells. This is necessary to make the GMM parameters independent of the discretization. The histogram in Fig. %s(b) and all subsequent take into account the cell volumes and are thus referred to as weighted histograms.

We set all confidences in the prior petrophysical distribution to unity. Unity confidence parameters mean we have equal trust in the geophysical model and in the prior information when computing the new GMM parameters at each iteration of the petrophysical characterization. Each new estimate of the parameters, at each iteration of the MAP-EM algorithm (equations 27, 32 and 34), is thus a simple unweighted average of the observed and priors. This allows us to see how well each parameter of the GMM is recovered without forcing or fixing its value in the inversion.

5.1.3Comparison of the Tikhonov and PGI results¶

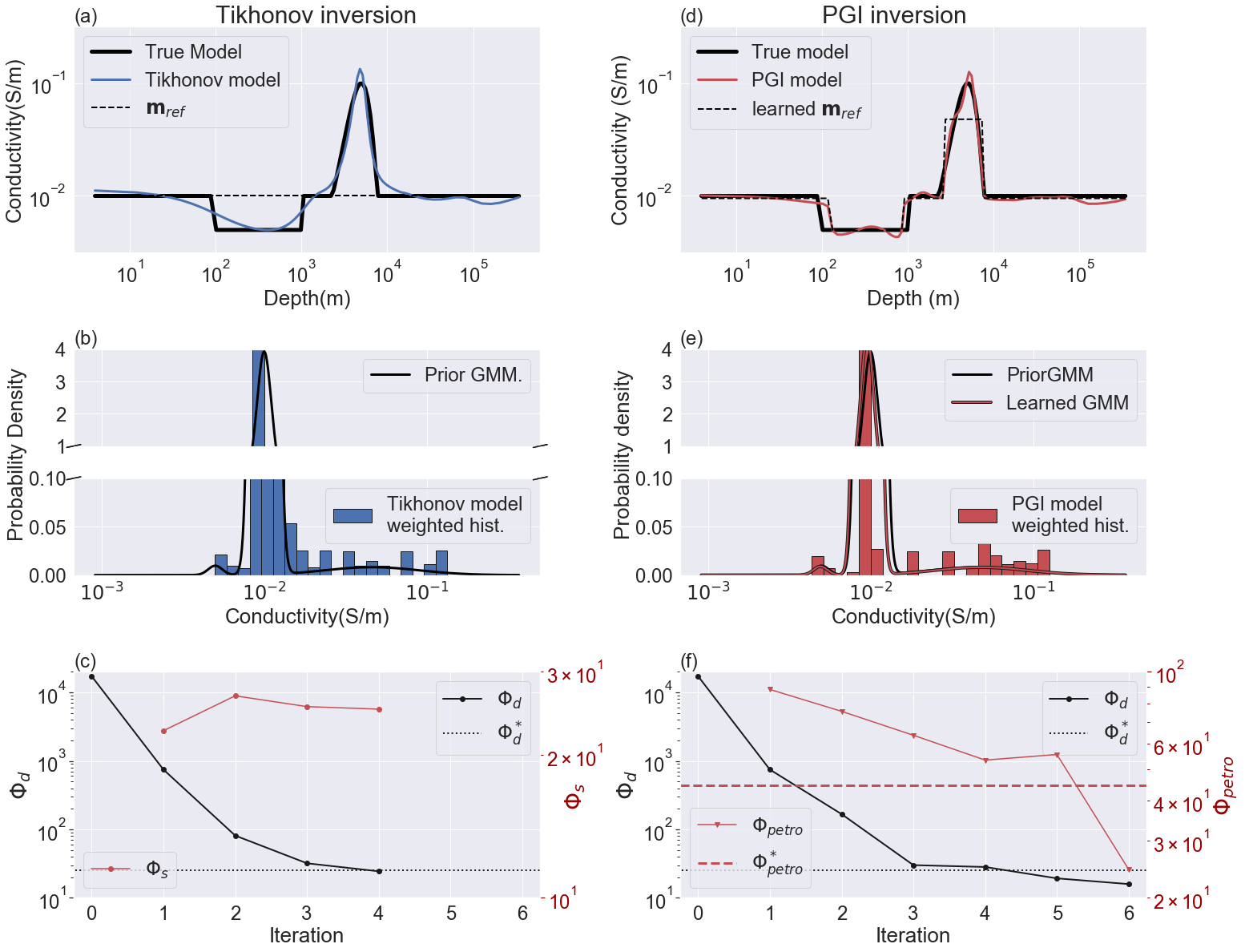

Figure 8:MT1D example results and comparison: Figs (a), (b) and (c) show the recovered geophysical model, its histogram and the convergence curves respectively for the Tikhonov approach. Figs (d), (e) (f) shows the same plots for the PGI approach.

The results of the Tikhonov and PGI algorithms are shown in Fig. 8. The Tikhonov model is presented in Fig. 8(a) and the PGI model is shown in Fig. 8(d). The Tikhonov inversion is smooth everywhere, but character of units blockiness or smoothness are much better recovered in the PGI model. Note that both algorithms started from the same reference model. This reference model is kept constant in the Tikhonov approach whereas the PGI algorithm updates it, along with the smallness weights, at each iteration.

The PGI recovered petrophysical distribution (Fig. 8e) is also much closer to the prior and true distributions, compared to the Tikhonov result (Fig. 8b). The means, variances and proportions of each unit are well recovered. Despite the fact that we used only confidences of unity in the prior GMM parameters, we were able to recover a learned petrophysical distribution that was close to the true distribution. The recovered variance of the background is (). This is less than our prior value of . This means that we recovered a background conductivity with a smaller variance than the one prescribed by the prior.

In addition to being closer to the ground truth, our inversion procedure converges in a similar manner as the Tikhonov approach (Figs 8c and f). The PGI method took only two additional iterations, compared to the Tikhonov approach, to reach the geophysical and petrophysical target misfits (shown respectively in black and red in Fig. 8f). They are reached respectively at the fifth and sixth iterations. Those two Figs 8(c) and (f) also highlight that the smallness is now a term to be minimized in the PGI. In Tikhonov we are unconcerned about the value of and it usually increases when β decreases. In PGI, measures how well the petrophysical and geological information are recovered.

5.1.4Step-by-step of the PGI iterations¶

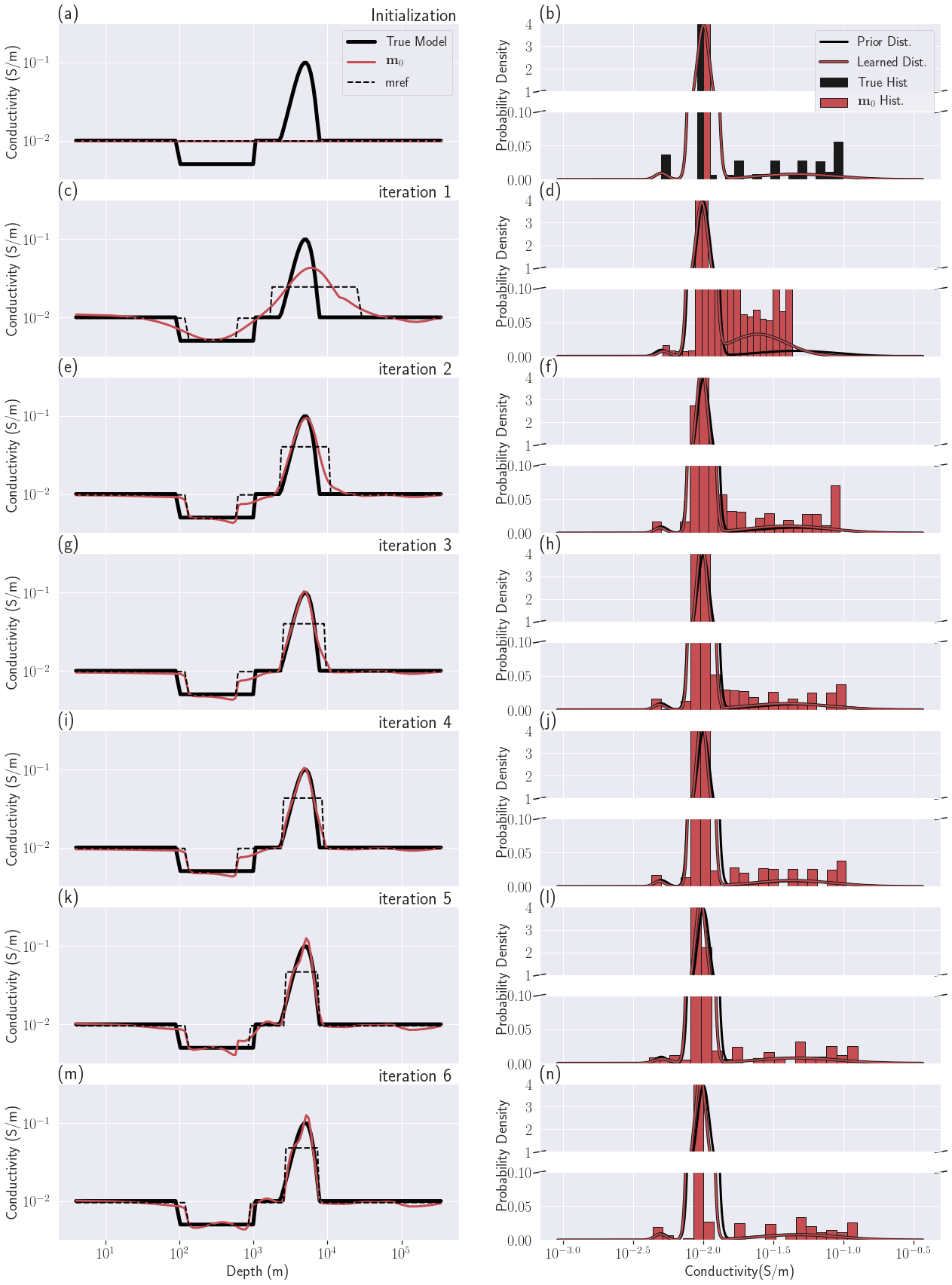

Figure 9:Iterations of the PGI MT1D example

The iterations of the PGI are shown in Fig. 9. Figs 9(a) and (b) show our initialization. The initial and reference model is a half-space with a conductivity equal to the background conductivity. The prior and initial petrophysical distribution was described in the initialization section for this example. The first iteration (Figs 9c and d) is the same as the first Tikhonov iteration and the current recovered geophysical model is fairly smooth. In the Tikhonov inversion, the reference model remains the same throughout the inversion but in the PGI, the reference model and smallness weights are updated at the end of each iteration. The updated reference model is determined from the learned petrophysical distribution applied to the current geophysical model, as described in equations 21 to 23. The recovered petrophysical distribution at this first iteration already distinguishes three units but displays higher variances than the prior. The conductivity contrasts are underestimated but the overall geological classification is correct. The recovered geophysical model after the second step (Figs 9e and f), with the updated reference model and smallness weights from the previous step, starts to display the desired features both spatially and in its physical property distribution; we see a sharper resistive unit and smoother conductive unit. The geophysical target misfit is reached at iteration 5 (Fig. 9k and l) and the parameter is increased. In one further step (Figs 9m and n) both the geophysical and petrophysical target misfits are reached. The last iteration successfully clustered the model values while conserving . The sharp contrast of the resistive unit is well recovered as well as the smoothness of the conductive unit. The learned petrophysical distribution is very close to the prior distribution. It also has a lower variance for the background unit compared to its prior value, and thus is closer to the truth.

5.2DC2D, buried cylinders: Working with missing petrophysical information and adding geological information¶

In this example we illustrate the performance of our framework when no physical property mean values are available, and compare it to the result when full petrophysical information is available. We then show how geological information, such as depth and thickness, can be incorporated.

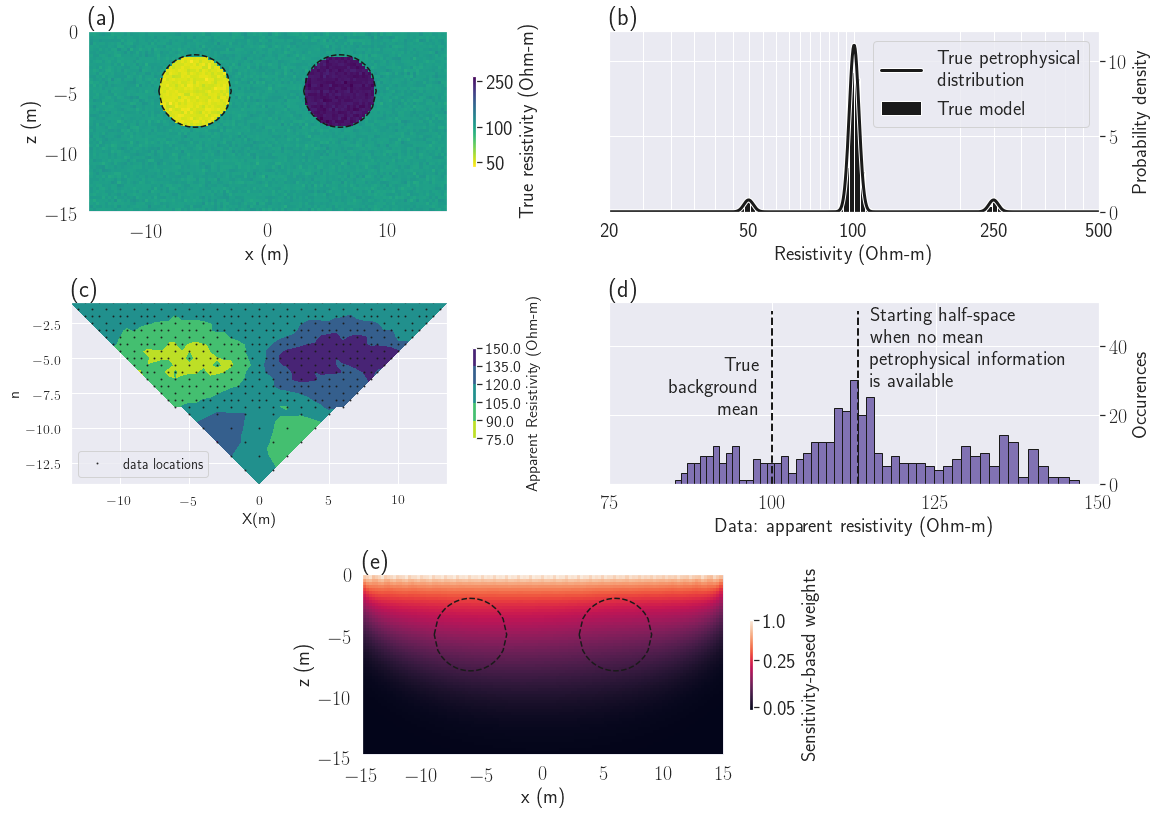

Figure 10:Setup of the DC resistivity example. (a): True conductivity. (b): True petrophysical distribution. (c): DC data to be inverted. (d): Histogram of the measured apparent resistivity. The best fitting half-space () is indicated by the vertical dashed line. (e): Sensitivity weights used for all inversions.

5.2.1Setup¶

We apply our procedure to a 2.5D DC resistivity problem (2D geology but 3D sources) to recover two cylindrical units, one conductive (with a mean resistivity of ), one resistive (with a mean resistivity of ) embedded in a background unit with a mean resistivity of (see Fig. 10a). To make the model slightly more geologically realistic, we added Gaussian noise to the log-conductivity model. The noise had zero mean and a variance of (see Fig. 10b).

Our survey is a dipole-dipole with electrode separation of and (Fig. 10c). The maximum dipole separation is set to . We simulate a total of 419 resistance measurements. Unbiased Gaussian noise, with a standard-deviation of of the original value, is added to the forwarded geophysical data. A histogram of the apparent resistivity data is shown in Fig. 10(d).

For the inversion we limit our active cells to the region covered by the survey data as shown in Fig. 10(a). The active mesh is composed of 7021 cells. For the geophysical data misfit uncertainties, we used a floor value of in addition to the noise standard-deviation.

For all of the inversions we used a sensitivity weighting that is similar to that developed in Mehanee et al. (2005). This is used in place of the usual smoothing weights at the surface to compensate for the high sensitivities near electrodes. Those weights are shown in Fig. 10(e). We use and as stopping criteria. To illustrate functionality, we also incorporated the learned reference model in the smoothness term of the geophysical objective function. This was done towards the end of the inversion, after the reference model was stabilized and was reached (see algorithm 1, step 6).

To set benchmarks for the inversion results, we first carry out a Tikhonov inversion and a PGI with full petrophysical information. We then run a PGI without providing any information about the physical property mean values or the proportions. We finally run another PGI, still without means information, but with added geological information included through the use of local proportion weights in the GMM. All inversions start with the same geophysical objective function.

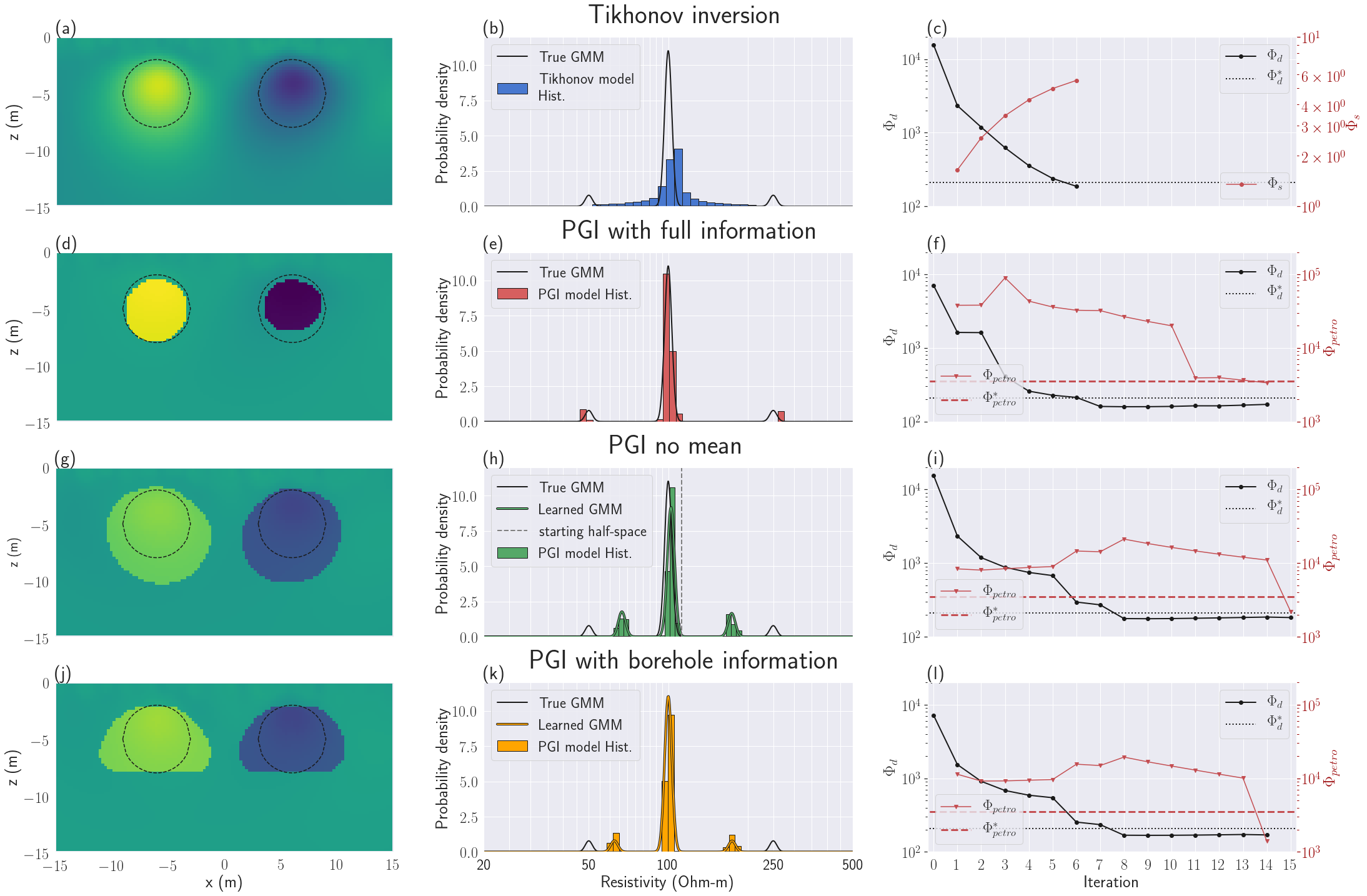

Figure 11:DC inversion results. The color scale is the same as for the true model in Fig. 10(a). Figs (a), (b) and (c) present the recovered model, histogram and convergence curves respectively for the Tikhonov approach. Figs (d), (e) and (f) show the same plots for PGI, when the true petrophysical distribution was provided. Figs (g), (h) and (i) show the results for PGI, when no information on the proportions or the physical property means of the units were provided. Figs (j), (k) and (l) show how PGI, with no mean information, can be improved by adding information about the depth and thickness of the cylinders.

5.2.2Tikhonov inversion¶

The Tikhonov inversion starts from the best fitting half-space of , instead of the true background value of (see Fig. 10d). The inversion produces the result presented in Fig. 11(a). The two bodies are detected but their edges are smoothed. This smoothness is also visible in the histogram of the model that is characterized by continuous values centered on the starting half-space value (Fig. 11b). The conductivity values do not attain the true electrical conductivity of the anomalies. The geophysical target misfit is reached in six iterations (Fig. 11c). The parameter β was cooled at each iteration and the smallness term kept increasing; this is expected in the Tikhonov approach.

5.2.3PGI with full petrophysical information¶

In this example we first want to establish a benchmark result by using the true petrophysical distribution with global proportions. We fix the petrophysical distribution at the true one (Fig. 10b) by setting all our confidences in the petrophysical prior to infinity. Thus is fixed for all iterations. We start from a half-space with the true mean background value.

This benchmark inversion recovers the two cylinders (Fig. 11d) quite well. The locations of the cylinders, their outer boundaries and their conductivities are in reasonable agreement with the true model. The geophysical model also satisfactorily fits the true petrophysical distribution being imposed (Fig. 11e). The geophysical target misfit is reached after seven iterations (Fig. 11f). An additional seven iterations is needed to reach the petrophysical target misfit, while keeping . The two increases of seen at iteration 3 and 7 correspond to β-cooling. This was necessary since the geophysical misfit was not decreasing enough (see algorithm 1, step 6). The important decrease seen at iteration 11 corresponds to the inclusion of the learned reference model in the smoothness. This helps us reach the petrophysical target misfit. This highlights the importance of incorporating a discontinuous reference model into the smoothness terms so that large gradients in the model are not penalized. This allowed for the final model to have sharp boundaries which is consistent with the true model.

5.2.4PGI with no physical property means information¶

We now consider the situation where no prior information is known, except the expected number of units (three in this example). We can still use our framework but we turn off the prior on the means and proportions. The confidences are thus set to zero (no prior information on the means or proportions). We used the true variances with infinite confidences in them. We thus have specified the petrophysical noise levels, which regulate how much each unit has to cluster around its unknown mean. This is analogous to choosing an appropriate geophysical data noise level. Our inversion starts from the best fitting half-space, as in the Tikhonov inversion, since the true background value is now considered to be unknown.

The recovered geophysical model still displays a clustered aspect with structures close to the ground truth (Fig. 11g). The volumes of the two cylindrical units are overestimated. The histogram and model show three distinct units. Without the true means information, the recovered means of the cylinders are slightly shifted towards the background value (Fig. 11h). Interestingly the recovered mean of the background is closer to the true mean than the initial half-space model. The number of iterations to reach both target misfits is comparable to the PGI example that used full petrophysical information.

5.2.5PGI with geological information¶

Our next goal in this example is to illustrate how geological information might be included. It is motivated by the fact that, in the two previous PGI results (using full and no petrophysical information), the cylindrical units display various size anomalies compared to the ground-truth and extend to different depths. Suppose information about the anomalous zone’s depth and thickness are provided. This additional information can be added to the inversion through local proportion parameters , by making them vary with depth. At depths where the anomalous bodies are expected, we set equal prior expectation of encountering any of the three units (so here for all three units for locations at depth between and ). At depths where only the background is expected, we set and . Those proportions are summarized in table 1. We used this prior information in a new PGI, using the petrophysical distribution recovered from the PGI with no mean information as .

Table 1:Proportions of the GMM as a function of depth to include geological information. Legend: Bckgrd: background; cond.: conductive unit; res.: resistive unit.

| Depth range (m) | |||

| 1 | 0 | 0 | |

| 1 | 0 | 0 |

The recovered model (Fig. 11j) is similar to the PGI with no petrophysical information except the anomalies are now restricted to the right depths. Comparing the two learned distributions, with and without depth information, we notice that the recovered conductivity values from the PGI model with geological information are closer to the ground-truth than the conductivities recovered from the PGI with no petrophysical or geological information (Fig. 11k). The convergence of the algorithm is similar to the previous PGIs (Fig. 11l).

5.3Using PGI to reduce ambiguity: a field example¶

In any inversion the final result is affected by many terms and parameters in the objective function, but of particular interest here is the choice of reference model. In a Tikhonov inversion starting from different half-space reference models can lead to different solutions, and this complicates the interpretation. Some of the ambiguity might be reduced if more information is incorporated. The PGI approach allows us to add information, such as a desired number of geological units, that are otherwise difficult to use in a Tikhonov setting. Adding a constraint on the number of units we wish to recover, without incorporating other extensive geological or petrophysical information, can be enough to reduce the realm of possible models.

We illustrate this on a field frequency-domain EM dataset Ward & Hohmann, 1988 for saline water delineation. We begin by emphasizing the discrepancies seen in Tikhonov models using different half-space reference models. We then show that by using PGI and assuming an expected number of distinct units, that starting from different reference models leads to similar inversion results that have consistent petrophysical distributions and simplified structures. The lack of dependence upon the initial and initial reference models helps to build confidence in the final images.

5.3.1Setup¶

Figure 12:Bookpurnong survey area. The elevation of the survey area is represented as an overlaid colormap. The white arrows represent the water flow, both of the river and from the irrigation zone. Geographic system: WGS 84 / UTM 54S. Aerial images from Google Earth, 2019 DigitalGlobe.

The Bookpurnong irrigation District is part of the Riverland region of South Australia along the Murray river. The irrigation on the highland river bank has lead to the salinization of the floodplain soil and threatens to make the area inhospitable for vegetation (Fig. 12). The key issue is to determine if (and where) the fresh water river is charging the aquifer (called "losing stream", this is the healthy scenario), or if the saline aquifer is charging the river, and thus contaminating the floodplain soil ("gaining stream" scenario). To help the work of hydrogeologists, various frequency and time-domain electromagnetics surveys have been conducted to characterize saline zones which are diagnosed by an increase in electrical conductivity. High conductivities close to the surface will indicate a "gaining stream" scenario while low conductivities will be a sign of a "losing stream".

The Bookpurnong case study has been studied in previous publications Viezzoli et al., 2009Viezzoli et al., 2010Yang, 2017. Here we focus on the RESOLVE frequency domain dataset, flown in 2008, which covers an area of approximately by .

At each sounding, the RESOLVE system measures the real and imaginary parts of the induced magnetic field for 5 frequencies ranging from to . The survey consists of 1022 soundings, resulting in a total number of data of . For the geophysical noise levels, we use a standard-deviation of of the absolute value of the datum and add a floor value; this is consistent with previous studies.

5.3.2Inversion setup¶

To invert the RESOLVE dataset, we choose a laterally-constrained 1D approach similar to Viezzoli et al. (2008) using the EM1D module of the SimPEG package Heagy et al., 2017Kang et al., 2018. Each sounding is inverted in 1D using a mesh with 19 layers that extends to a depth of . The cells increase in size from at the surface to for the last layer. We use the thickness of the layers as weights in the regularization, which relates to an integral formulation of the regularizer Oldenburg & Li, 2005.

For each Tikhonov and PGI approach, we run two separate inversions using () and () models respectively as reference models (thus four inversions are carried out). The reference models are also used as initial models . The reference model stays the same throughout the Tikhonov inversions while the PGI algorithm updates it.

5.3.3Tikhonov inversions¶

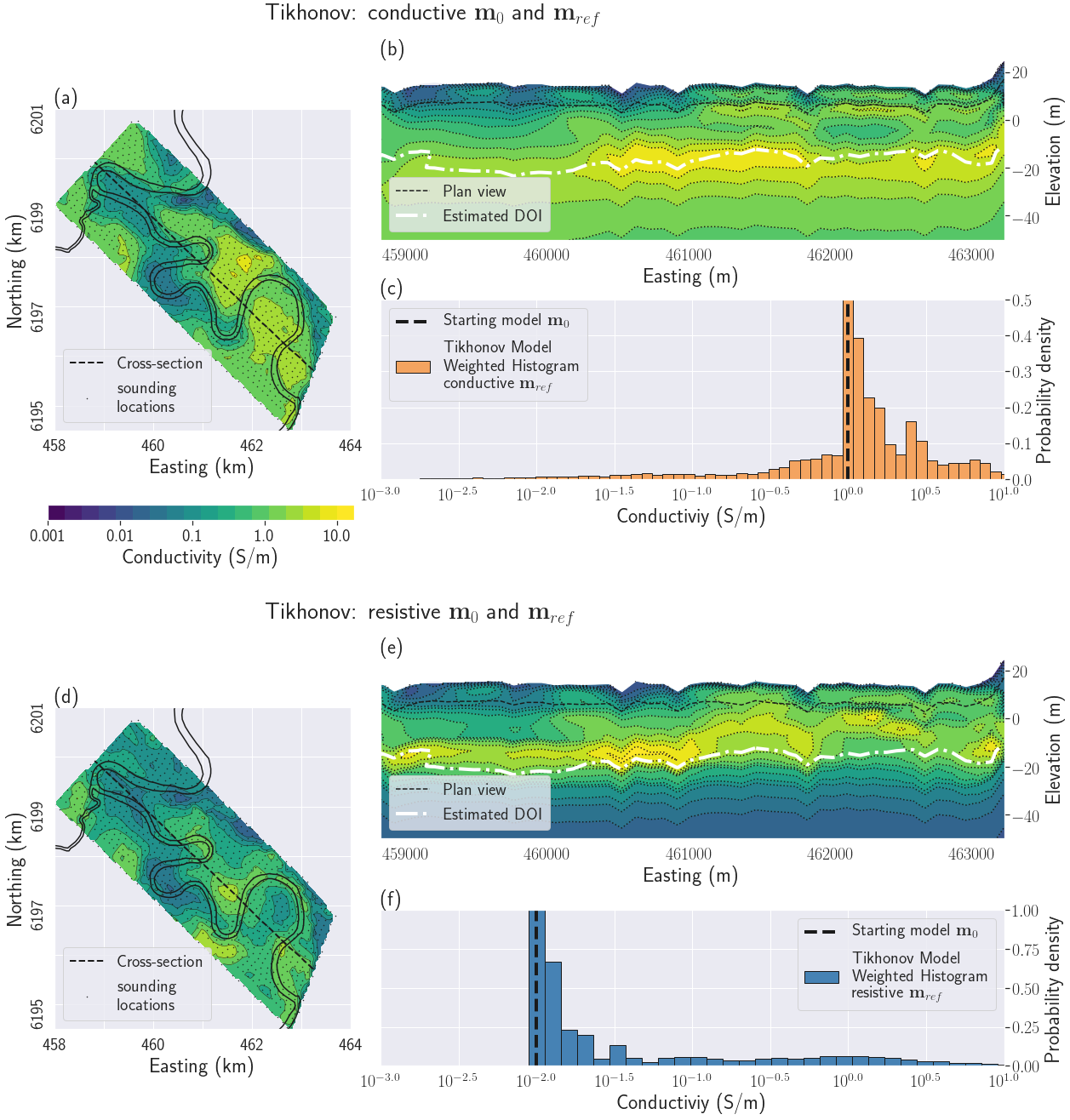

Figure 13:Tikhonov results with two different reference models. All figures share the same color scale for conductivity. Figs (a), (b) and (c) show the results starting from a conductive half-space. Figs (d), (e) and (f) show the result starting from a resistive half-space. Figs (a) and (d) display the recovered geophysical models on a plan view at a depth of 8.5 m. Figs (b) and (e) display a cross-section of the recovered geophysical models. Depth of investigation (DOI) evaluated using the method presented in Oldenburg & Li (1999). Figs (c) and (f) display the histogram of the recovered model for each inversion.

The results of the Tikhonov inversions using two different reference models are summarized in Fig. 13. Both Tikhonov models (Figs 13a and d) detect the river at the surface as a resistive feature; it is also visible where the cross-section crosses the river near ,, 460.5, 461, 461.8 and 462.5 km on Figs13(b) and (e). Both models also pick up highly conductive features at depth and the laterally-constrained regularization helps ensure horizontal continuity. The ranges of conductivity are similar to those observed in boreholes Holland et al., 2008.

In the north-west part of the area, the recovered conductivity models are consistent and transition smoothly from resistive at the surface to conductive at depth. In the south-east part of the area, more discrepancies in the two models are visible (see section 5.3.5 for a quantification of the discrepancies). On the plan views (Figs 13a and d), the overall recovered conductivities in the biggest bend in the river are an order of magnitude different between the two inversions. Inconsistent pocket-like structures are seen in both inversions in the cross-sections (Figs13b and e). Both cross-sections however show that the conductive layer is closer to the surface in the South-East than in the North-West.

5.3.4PGI¶

We now apply the PGI approach without providing specific petrophysical information; only the number of units is specified. We show that similar geophysical models and petrophysical distributions are obtained even though the inversions are carried out with different initial reference models.

As we did for the Tikhonov inversions, we now run two inversions starting from uniform () and () models respectively, used as initial and initial reference models. For the conductive and resistive reference model cases respectively, both the Tikhonov and PGI approaches start with the same objective function; the models after the first iteration are thus identical.

For the prior petrophysical distribution, we do not specify any prior value for the mean conductivity values nor the proportions; their respective confidence parameters are thus set to zero. We choose variances of . We set the confidences in the prior covariances to unity. Our goal with this choice is to recover a model with distinct units but still reasonably smooth.

A critical point here is to choose the number of distinct units we want to recover. We assume that there are three units, as this matches our interpretation goal of distinguishing freshwater from salinized water, with an additional transition zone. This adds an additional constraint on the models we want to recover.

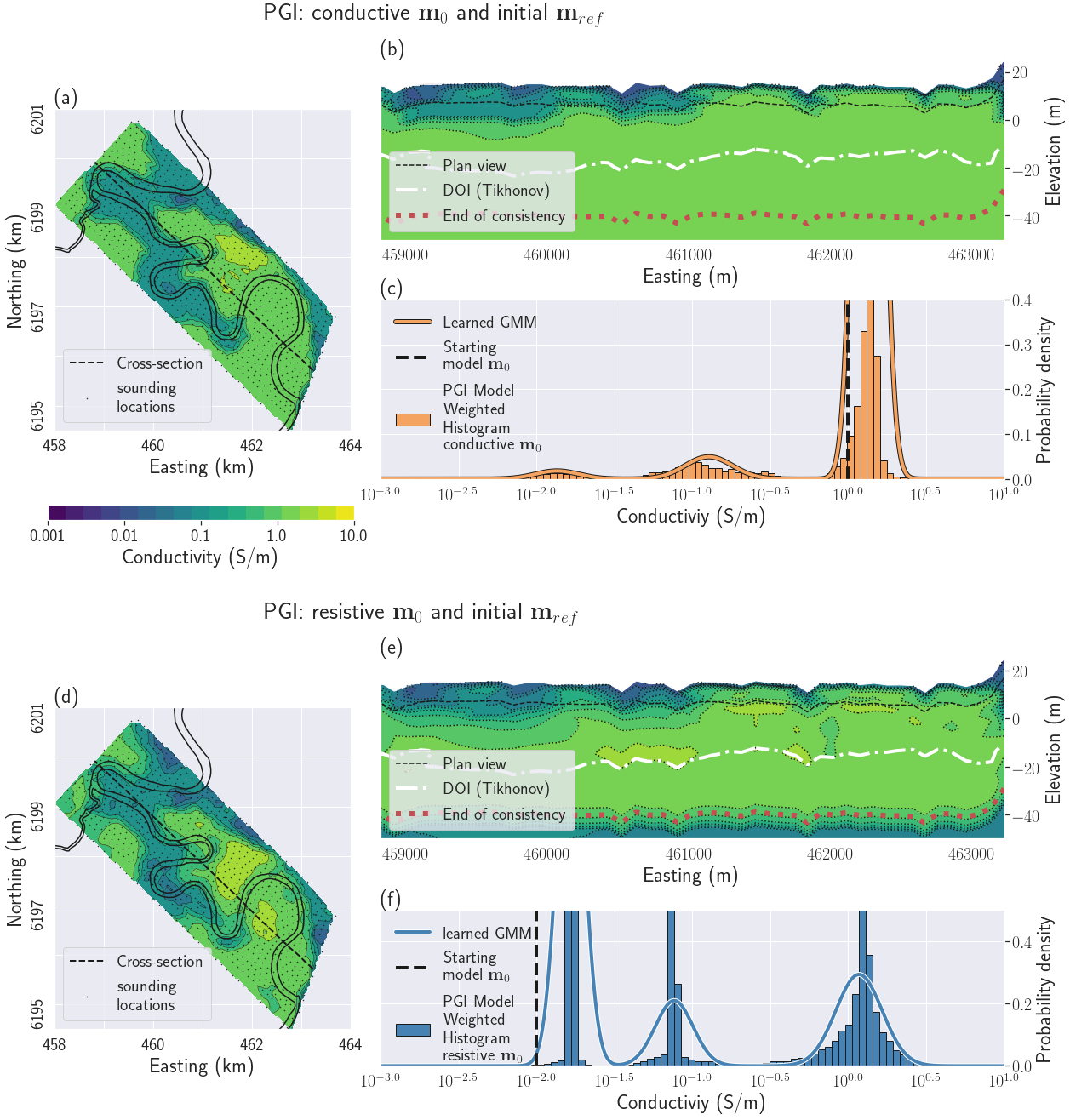

Figure 14:PGI results with two different initial and initial reference models. All figures share the same color scale for conductivity. Figs (a), (b) and (c) show the results starting from a conductive half-space. Figs (d), (e) and (f) show the results starting from a resistive half-space. Figs (a) and (d) display the recovered geophysical models on a plan view at a depth of 8.5 m. Figs (b) and (e) display a cross-section of the recovered geophysical models. Figs (c) and (f) display the histogram of the recovered model and the learned petrophysical distribution for each inversion.

The PGI results are compiled in Fig. 14. All PGI have reached their geophysical and petrophysical misfits. The recovered models (Figs 14a and 14d) are more consistent with each other than those in the Tikhonov case; we will discuss this later in section 5.3.5.

Counterintuitively, by clustering the histograms we actually smoothed the resulting model by forcing more homogeneity inside each distinct unit. Neither cross-section (Figs 14b and e) shows the pocket-like structure in the south-east end of the profile that were observed in the Tikhonov inversions; it indicates that those features were not robust. The high conductivity layer is closer to the surface in the South-East than in the North-West in both models.

The histograms and recovered petrophysical distributions (Figs 14c and f) display several interesting characteristics. First we recover three distinct units that can be interpreted as fresh, transition and saline zones. The learned means of the clusters are very similar between the two inversions. We notice that in the case of the resistive initial models, the resistive unit conductivity has been corrected to about (). The same phenomenon happens for the conductive initial models case, with a corrected conductive unit conductivity value of (). This behavior was noticed in the DC example (section 5.2) when starting from a biased background value. The major difference between the GMM for the two models is the variance and proportion of the most resistive and most conductive units. This is due to the volume of the region in the padding zones that are outside the region of influence of the data. The values of conductivity in those regions stays close the cluster mean which is closest to the conductivity of the initial reference model.

5.3.5PGI - Tikhonov comparison¶

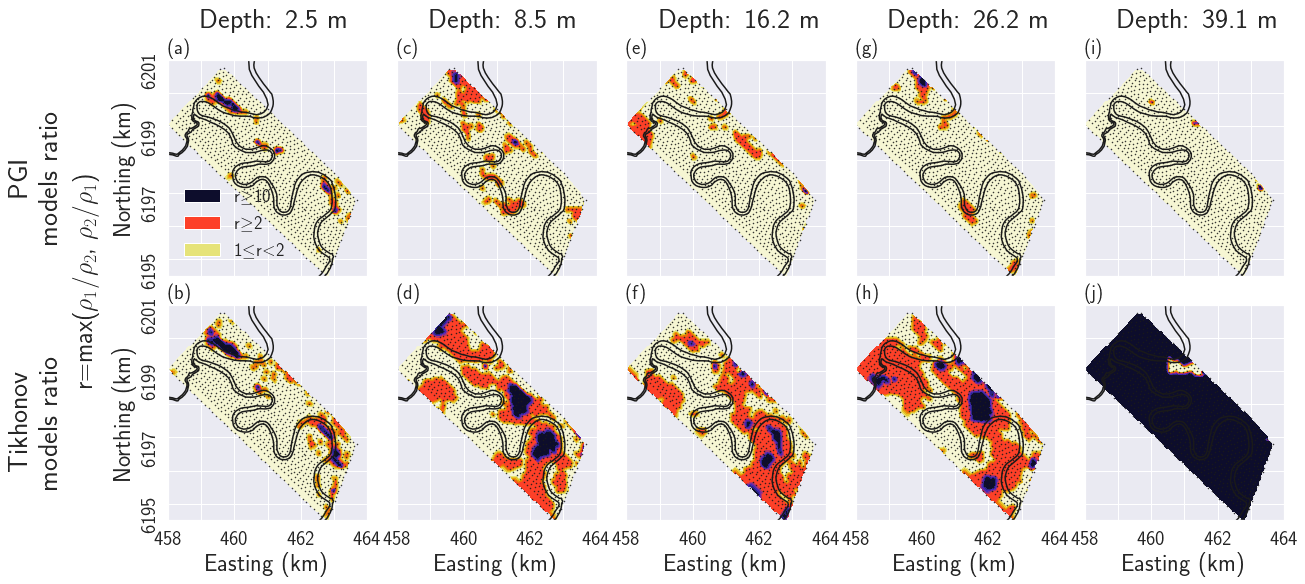

In addition to the removal of unnecessary structure mentioned earlier, the models recovered with the PGI approach display more consistent subsurfaces features than do the Tikhonov models. To quantify the similarities between models within the same methodology but with different initial and reference models, we adopt an approach analogous to the depth of investigation (DOI) estimation presented in Oldenburg & Li (1999). For the two Tikhonov and the two PGI conductivity models respectively, which for clarity in the notation are referred to in the following equation as electrical resistivity and , we computed their maximum ratio everywhere:

A ratio of unity indicates similar values of conductivity. The higher the value of , the more the two models differs. Fig. 15 shows the result of this two-by-two comparison. The two PGI models are quite consistent, with ratio values close to unity almost everywhere, except in a small area in the North-West. On the contrary the Tikhonov inversions show significant discrepancies, even in the near-surface, with values greater than 2 in most of the survey area. This is especially visible near the important bend in the river in the South-East where discrepancies of more than an order of magnitude () are observed (dark areas in Fig. 15). Fig 15(j) highlights when the Tikhonov inversions reached their DOI and where the final model is controlled mostly by the regularization. It is worth noticing that the PGI models are still consistent past the DOI estimated from the Tikhonov inversions (Figs 14b and e).

Figure 15:Consistency comparison between the two Tikhonov and the two PGI models, starting with conductive and resistive initial and reference models. The first row displays the ratio of the two PGI conductivity models at different depths. The bottom displays the same ratio for the two Tikhonov conductivity models. Depths of the plan views increase from left to right. Pale yellow means the two conductivity models differ by less than a factor 2. Orange highlights areas where the recovered conductivities differ by more than a factor 2. Dark purple emphasizes area where the two recovered conductivity models differ by more than a factor 10.

5.3.6Field example summary¶

We demonstrated that even in the near-surface above the depth of investigation the Tikhonov inversions had different structures when different initial and reference models were used. With the PGI approach, the two inversion lead to similar near-surface models that have consistent petrophysical distributions. The removal of structures, evidently not required by the data, and the simplification of the geology, provides a result that is more appealing to interpret geologically. Making a reasonable guess about the variances and the number of units were the crucial additional information required to achieve this. All four models point to a "losing stream" scenario in the North-West while three (both PGI models and the Tikhonov inversion with a conductive reference model) indicate a "gaining stream" in the South-East. This is coherent with the conclusions given in Viezzoli et al. (2009)Viezzoli et al. (2010) and Yang (2017). To further extend our analysis, ground-truthing the depth of the saturation salinization zone and adding it into the PGI as geological information, or adding petrophysical measurements, would be required.

6Discussion¶

We have presented a framework for carrying out a petrophysically and geologically guided inversion which allows the user to recover a quasi-geological rock model Li et al., 2019 and a petrophysical distribution in addition to the geophysical model. The framework has three main modules, each of which is formulated as a MAP estimate. The framework is flexible and goals, achieved by others and using different approaches, can be incorporated while the reverse is not true.

Carrying out an inversion that includes three separate sub-problems that communicate with each other, and for which two target misfits are to be achieved, is challenging. To address this we have provided a pseudocode as well as a flowchart in appendix 3. The important part of the procedure is the initialization step where the user must specify what is known, how to incorporate it, and what they want as an end product. The examples explore those various aspects as well as the convergence of the PGI approach.